What a Platform Owes Itself

Some work anchors a launch post. Most doesn’t. The rewrites, the lifecycle discipline, the observability, the documentation that goes unread until the moment someone needs it — none of it makes for a compelling screenshot. All of it decides, quietly, whether the features you do ship hold together over time.

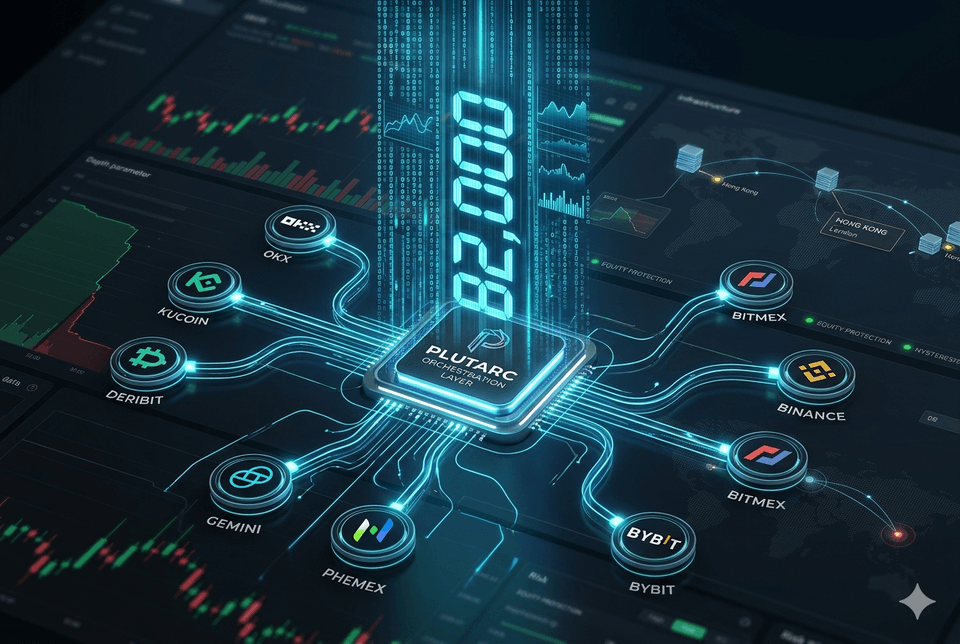

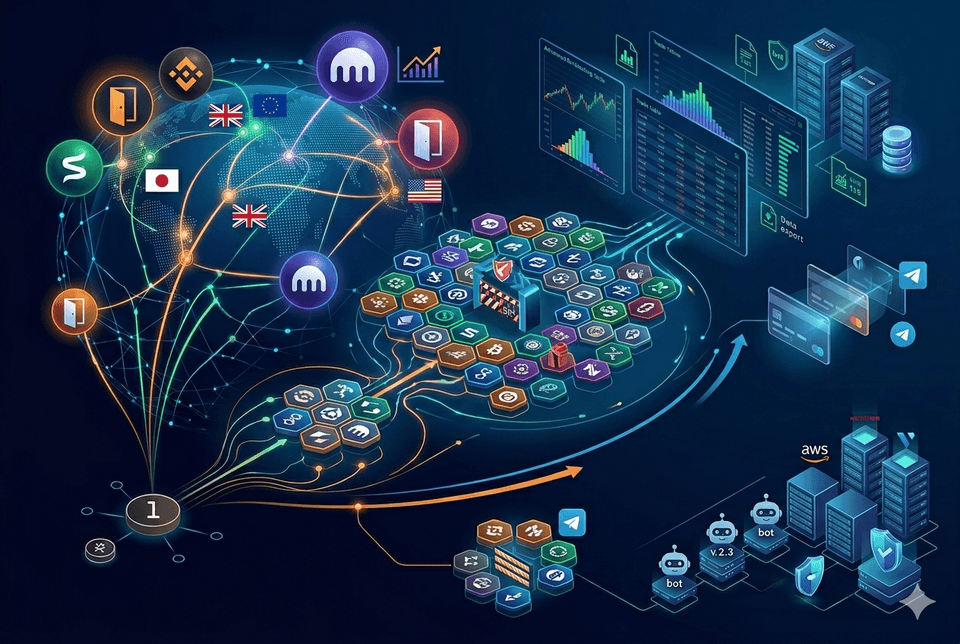

The last post was about what a platform owes the people who use it. This one is about what it owes itself — the internal scaffolding that makes the external promises keepable in the first place. Earlier updates tracked growth outward: more exchanges, more components, more regions. Most of what’s landed since has been the opposite movement — work turned inward, into the foundations that have to hold as the growth accumulates.

plutarc is the accumulation of nearly a decade of practical work in markets — analysing, commenting, narrating, trading. Scalps, swings, arbitrage, the habit of trading both sides of the orderbook manually for the feel of it, a long education in the psychology of how price actually moves. The programming and systems-design history runs further back than that. The internal work I want to write about here is what it takes to turn all of that into something that holds without me in the middle of it.

The Core, Rebuilt

The bot architecture has been rewritten end to end, around a hexagonal core. The original was organised around what the bot did — run strategy, hit exchange, manage position, handle errors along the way — and it worked, in the sense that bots ran. What it didn’t do well was change. Adding a new exchange adapter meant threading integration concerns through the same code that owned the strategy logic. Adding a new order type meant the same. Every addition touched the middle, because the middle was where everything lived.

The new architecture inverts that. Domain logic — strategies, signals, risk — sits in the core, with no knowledge of who it’s talking to. Exchanges, persistence, messaging, and every other external concern sit at the edges, behind interfaces the core defines. Ports and adapters is a well-understood pattern; the value of it isn’t novelty but the thing it enables. Swapping an exchange doesn’t touch the strategy. Adding a risk check doesn’t touch the exchange. The cost of extension is bounded by design, not by luck.
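A minimal sketch of that boundary, assuming a TypeScript codebase. Every name here (`ExchangePort`, `StrategyCore`, `InMemoryExchange`, `maxQuantity`) is hypothetical and chosen for illustration, not taken from the actual bot code. The core defines the interface, the adapter implements it, and a risk check is a plain function that never sees an exchange.

```typescript
type Side = "buy" | "sell";

interface Order {
  symbol: string;
  side: Side;
  quantity: number;
}

// Port: declared by the core, implemented at the edge (hypothetical shape).
interface ExchangePort {
  placeOrder(order: Order): Promise<string>; // resolves to an order id
}

// A risk check is pure domain logic. It returns a rejection reason, or null if allowed.
type RiskCheck = (order: Order) => string | null;

const maxQuantity = (limit: number): RiskCheck => (order) =>
  order.quantity > limit
    ? `quantity ${order.quantity} exceeds limit ${limit}`
    : null;

// Core: depends only on the port and on the risk checks handed to it.
class StrategyCore {
  private readonly exchange: ExchangePort;
  private readonly riskChecks: RiskCheck[];

  constructor(exchange: ExchangePort, riskChecks: RiskCheck[]) {
    this.exchange = exchange;
    this.riskChecks = riskChecks;
  }

  async submit(order: Order): Promise<string> {
    for (const check of this.riskChecks) {
      const rejection = check(order);
      if (rejection !== null) {
        throw new Error(`risk check failed: ${rejection}`);
      }
    }
    return this.exchange.placeOrder(order);
  }
}

// Adapter: an in-memory stand-in for a real exchange integration.
class InMemoryExchange implements ExchangePort {
  readonly placed: Order[] = [];

  async placeOrder(order: Order): Promise<string> {
    this.placed.push(order);
    return `order-${this.placed.length}`;
  }
}
```

Swapping `InMemoryExchange` for a real adapter changes nothing in `StrategyCore`, and adding another `RiskCheck` to the array changes nothing in the adapter. That is the bounded cost of extension in miniature.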

Paired with the rewrite is a full state machine for the bot lifecycle. Bots used to move between states implicitly — a variable flipped, a flag changed, and the behaviour downstream adjusted. That works until it doesn’t: until two subsystems disagree about what state the bot is actually in, or until you need to answer, precisely, what transitions are legal from here. The state machine makes every transition explicit. States are named. Transitions are enumerated. Invalid transitions fail loudly rather than producing ambiguous behaviour. Lifecycle becomes something you can reason about instead of something you deduce from logs after the fact.
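To make that concrete, here is a small sketch of an explicit lifecycle in TypeScript. The state names and the transition table are assumptions made up for the example; the real bot lifecycle may name and connect its states differently. What it illustrates is the shape: named states, an enumerated table of legal transitions, and an error for anything not in the table.

```typescript
// Hypothetical state names, for illustration only.
type BotState = "idle" | "starting" | "running" | "stopping" | "stopped" | "failed";

// Every legal transition is listed here. Anything absent is illegal.
const TRANSITIONS: Record<BotState, readonly BotState[]> = {
  idle: ["starting"],
  starting: ["running", "failed"],
  running: ["stopping", "failed"],
  stopping: ["stopped", "failed"],
  stopped: ["starting"],
  failed: ["starting"],
};

class InvalidTransitionError extends Error {
  constructor(from: BotState, to: BotState) {
    super(`invalid transition: ${from} -> ${to}`);
    this.name = "InvalidTransitionError";
  }
}

class BotLifecycle {
  private current: BotState = "idle";

  get state(): BotState {
    return this.current;
  }

  // Answers "what transitions are legal from here?" directly.
  legalNext(): readonly BotState[] {
    return TRANSITIONS[this.current];
  }

  // Fails loudly on an illegal transition and leaves the state unchanged.
  transition(to: BotState): void {
    if (!TRANSITIONS[this.current].includes(to)) {
      throw new InvalidTransitionError(this.current, to);
    }
    this.current = to;
  }
}
```

Because the table is data, it can also be rendered, diffed in review, or checked in tests, which is harder to do with flags scattered across subsystems.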

The Nervous System

A trading system that fails silently is worse than one that fails loudly. A silent failure accumulates consequence you don’t notice until the account statement arrives. A loud failure interrupts you with information you can act on.

Pino is now wired through the stack. Every meaningful operation produces a structured log line — JSON, queryable, correlated by bot ID and session — so that reconstructing what happened during a specific window is a matter of filtering, not archaeology. Sentry sits alongside it, catching exceptions the moment they surface, with enough context attached to trace the failure back to the code path and, usually, the input that triggered it. The two together mean that what went wrong has an answer before the user has to ask.
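The correlation idea can be sketched without any dependency. The block below is a hand-rolled stand-in, not Pino's implementation: it imitates the child-logger pattern (Pino exposes this as `logger.child(bindings)`) so the example runs on its own. The field names `botId` and `sessionId`, the `JsonLogger` class, and the `filterByBot` helper are all assumptions for illustration.

```typescript
type Fields = Record<string, unknown>;
type Sink = (line: string) => void;

// Minimal stand-in for a structured logger with child bindings.
class JsonLogger {
  private readonly sink: Sink;
  private readonly bindings: Fields;

  constructor(sink: Sink, bindings: Fields = {}) {
    this.sink = sink;
    this.bindings = bindings;
  }

  // A child logger carries its parent's bindings plus its own on every line.
  child(extra: Fields): JsonLogger {
    return new JsonLogger(this.sink, { ...this.bindings, ...extra });
  }

  info(fields: Fields, msg: string): void {
    const entry = { level: "info", time: Date.now(), ...this.bindings, ...fields, msg };
    this.sink(JSON.stringify(entry));
  }
}

// Reconstructing a window of activity becomes a filter over parsed lines.
function filterByBot(lines: string[], botId: string): Fields[] {
  return lines
    .map((line) => JSON.parse(line) as Fields)
    .filter((entry) => entry.botId === botId);
}
```

In use, a bot session would create one child logger up front, for example `root.child({ botId: "bot-a", sessionId: "s-1" })`, and log through it everywhere, so no call site has to remember to attach the identifiers.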

CI orchestrates the rest. Nothing reaches production without passing through the same gates — typecheck, lint, tests, schema validation, build — and nothing gets past them silently. The gates aren’t new. What’s new is that they’re consistent, mandatory, and observable. A platform that ships often is only safe if the act of shipping is disciplined enough to be boring.

The Operator Side

A support ticketing system shipped in the last cycle. What’s landed since is the layer that support operators themselves work inside: an employee dashboard with its own role-based access control, its own set of standard operating procedures, and a complete audit log of every action taken within it.

The SOPs matter more than they look. Writing down how an operator should triage a ticket, how to respond to specific categories of issue, where to escalate and when, is the kind of document that reads as overhead until the moment you hire someone and realise you have nothing to hand them. The reference material isn’t for the operators who exist. It’s for the operators who will. Written now, while the answers are still fresh and still mine, it costs less than it will later.

The audit log is the part the platform owes itself. Every action an operator takes (ticket accessed, ticket reassigned, internal note added, user data viewed) is recorded against their identity, timestamped, and immutable. The purpose isn’t surveillance. It’s that the moment more than one person has access to sensitive operational surfaces, the question of who did what needs a structural answer, not a cultural one. A single-operator product that scales to a support team is a different shape from a support-team product that collapses to a single operator. The record has to exist before the team does, or the team arrives into a gap that’s already cost something.
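As a rough sketch of what such a record might look like in TypeScript: the action names mirror the examples above, while `AuditEntry`, `AuditLog`, and the field names are hypothetical. Freezing entries in memory only illustrates the append-only intent. In a real system, immutability would have to be enforced where the log is stored, which this example does not attempt.

```typescript
type AuditAction =
  | "ticket_accessed"
  | "ticket_reassigned"
  | "internal_note_added"
  | "user_data_viewed";

interface AuditEntry {
  readonly operatorId: string;
  readonly action: AuditAction;
  readonly targetId: string;
  readonly at: string; // ISO 8601 timestamp
}

class AuditLog {
  private readonly entries: AuditEntry[] = [];

  // Append-only: there is deliberately no update or delete method.
  record(
    operatorId: string,
    action: AuditAction,
    targetId: string,
    now: Date = new Date(),
  ): AuditEntry {
    const entry: AuditEntry = Object.freeze({
      operatorId,
      action,
      targetId,
      at: now.toISOString(),
    });
    this.entries.push(entry);
    return entry;
  }

  // "Who did what" is a query, not a reconstruction.
  byOperator(operatorId: string): readonly AuditEntry[] {
    return this.entries.filter((entry) => entry.operatorId === operatorId);
  }
}
```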

Writing for Engineers Who Don’t Exist Yet

The hardest documentation to write is for the developer you haven’t hired. You can’t ask them what they need. You can only imagine the person arriving cold at the repository and try to leave them the artefacts that would have helped you, six months ago, understand your own system.

What’s shipped: schema documentation across the data model, code-level documentation in the places where the why is non-obvious, and a set of development guidelines that codify the decisions shaping the codebase. Why this architecture, not that one. Why this error-handling pattern, not the other. Which deviations are structural and which are incidental. The guidelines are less a style guide than a record of judgements, explained — so that a future engineer who disagrees can do so knowingly, and one who agrees can do so with the reasons attached.

The honest motivation is survival. Solo-founded codebases tend to die at the transition from one builder to a team. Not because the code is bad, but because the context lives in one head and doesn’t transfer. A decade of watching markets and a longer one of building systems is worth very little to the next person if it stays tacit. Documentation is how you externalise what would otherwise evaporate. Writing it now, while the decisions are still warm, is cheaper than reconstructing it later from commit history and memory.

None of this is the kind of work that anchors a launch post. There are no screenshots for a state machine, no demo flow for an audit log, no animated before-and-after for a hexagonal refactor. That’s the point. What a platform owes its users is visible in the features they touch. What it owes itself is visible only in what doesn’t break, doesn’t drift, doesn’t evaporate when the team grows or the founder steps back. The ratio between those two is what determines whether the visible part can actually be trusted.

The external surface gets the attention. The internal one decides whether the attention was warranted.